NVIDIA's $20 Billion Bet: How Groq 3 LPX Is Reshaping the Future of AI Inference

The silicon story behind one of the largest AI infrastructure deals of the decade—and why heterogeneous inference is now center stage.

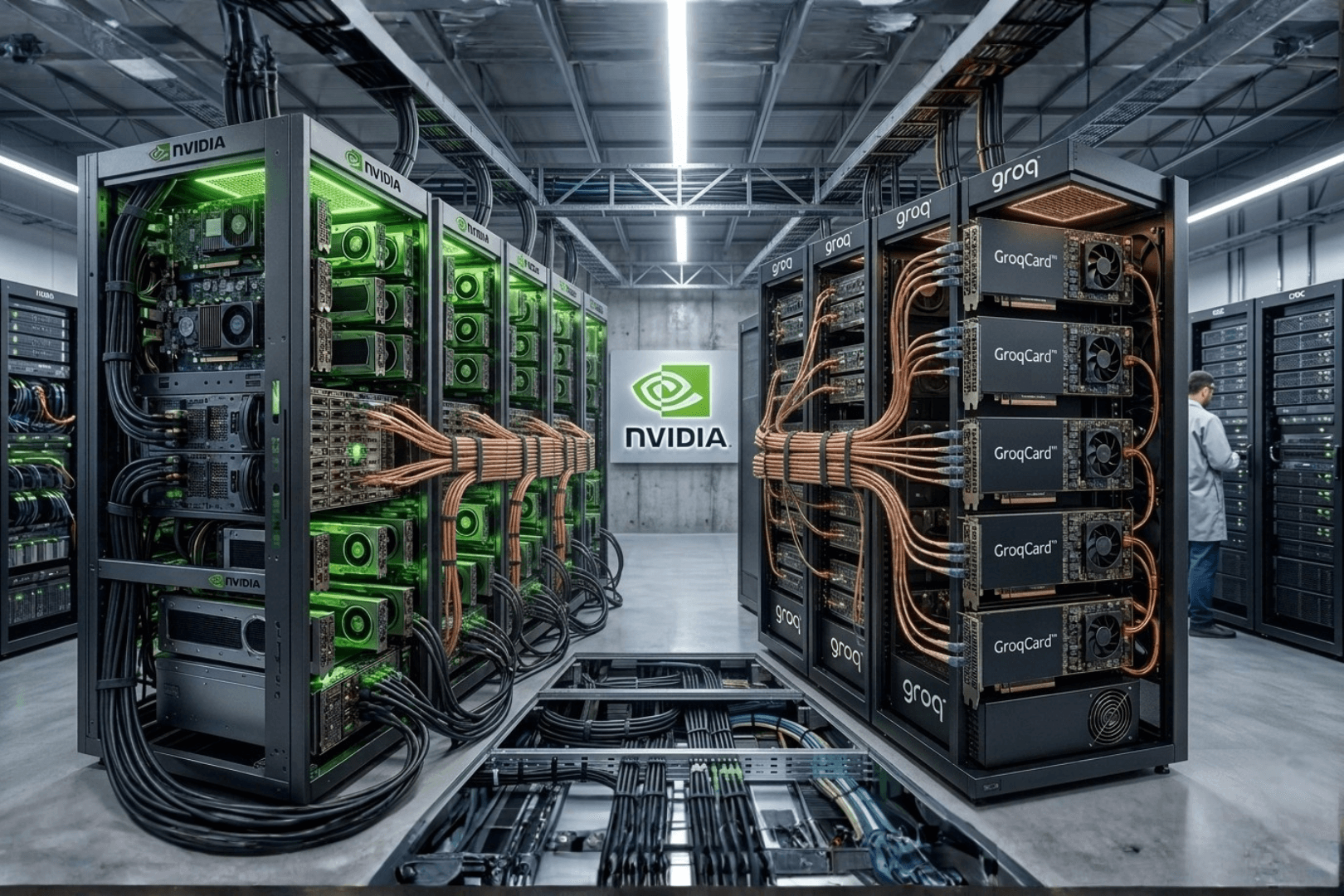

The Deal That Shocked Silicon Valley

In late December 2025, NVIDIA completed a roughly $20 billion acquihire of AI inference company Groq, bringing most of the engineering organization in-house and licensing the technology behind its LPU (Language Processing Unit) dataflow engines. The structure avoided a conventional buyout narrative that might have dragged on and drawn heavier regulatory attention; the move was fast, targeted, and visibly urgent.

For months afterward, NVIDIA said little in public about the strategic rationale. At GTC 2026 in San Jose, that changed. Jensen Huang introduced the NVIDIA Groq 3 LPX—the first product line clearly rooted in that transaction—now positioned as a first-class citizen on the NVIDIA Vera Rubin platform. The question of why Groq mattered started to read as an architecture answer, not just a headline.

Who Is Groq — And What Is the LPU?

Jonathan Ross, an engineer who helped shape Google's first TPU, founded Groq with a narrow mission: build an inference chip that wins on latency and predictability, not on reusing GPU assumptions. The result was the LPU (Language Processing Unit), a fully scheduled, deterministic processor built around a simple bet: put very large amounts of SRAM on the chip, keep model weights there, and avoid the off-chip memory-bandwidth bottleneck that often limits GPU-based LLM serving.

As generative AI scaled, that architecture showed up in benchmarks and customer stories as genuinely fast for token generation. Other vendors pursued SRAM-heavy or wafer-scale angles too; from NVIDIA's perspective, a wave of inference-focused startups was competing for the same operational budgets as datacenter GPUs. The Groq deal can be read as a decision to internalize the most credible alternative stack rather than let it mature independently beside CUDA.

The Groq 3 LPX: Born From a $20 Billion Deal

The Groq 3 LPX (internally referenced as LP30) is the third generation of the LPU architecture, now co-designed with NVIDIA and manufactured on Samsung 4nm. At GTC 2026, NVIDIA indicated general availability in the second half of 2026, with Q3 as the most likely window.

At rack scale, the story NVIDIA tells is built around 256 interconnected Groq 3 LPU accelerators per rack and a set of figures aimed at IO-bound inference workflows:

- 40 PB/s on-chip SRAM bandwidth aimed at feeding compute without memory stalls dominating the timeline.

- 640 TB/s rack-scale chip-to-chip communication through high-radix interconnects.

- Deterministic, compiler-orchestrated execution to avoid dynamic scheduling jitter and surprise tail-latency spikes.

- Up to 35× higher inference throughput per megawatt than GPU-only inference, by NVIDIA's positioning, for decode-heavy slices of the stack, plus a larger revenue opportunity in trillion-parameter serving scenarios.
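As a rough sanity check on the rack figures above, the arithmetic below splits the two aggregate bandwidth numbers across the 256 chips. It assumes both figures are rack totals shared evenly across chips and that PB and TB are decimal units; neither assumption is stated by NVIDIA, and the helper name is purely illustrative.

```python
# Rack-level figures quoted in the article.
LPUS_PER_RACK = 256
SRAM_BW_PB_PER_S = 40       # on-chip SRAM bandwidth, PB/s (assumed rack aggregate)
FABRIC_BW_TB_PER_S = 640    # chip-to-chip bandwidth, TB/s (assumed rack aggregate)

TB_PER_PB = 1000            # assumption: decimal units


def per_chip_share(rack_total: float, chips: int = LPUS_PER_RACK) -> float:
    """Split a rack aggregate evenly across chips (an assumption, not a vendor spec)."""
    return rack_total / chips


sram_tb_per_chip = per_chip_share(SRAM_BW_PB_PER_S * TB_PER_PB)
fabric_tb_per_chip = per_chip_share(FABRIC_BW_TB_PER_S)

print(f"SRAM bandwidth per chip:   {sram_tb_per_chip} TB/s")
print(f"Fabric bandwidth per chip: {fabric_tb_per_chip} TB/s")
print(f"On-chip vs. off-chip ratio: {sram_tb_per_chip / fabric_tb_per_chip}x")
```

Under those assumptions, on-chip bandwidth per chip is far larger than the chip-to-chip share, which is consistent with the design goal of keeping weights local and using the fabric only for activations passed between chips.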

The architectural point is not that GPUs are "bad": they remain unmatched for massive parallel training, prefill, and attention-heavy phases. The claim is that autoregressive decode is a different program shape, one that is more sequential and more sensitive to tail latency under concurrency. The LPU is optimized for that slice, offering predictable timing that large GPU clusters have historically struggled to guarantee with the same operational simplicity.

A Heterogeneous Masterpiece: LPX Meets Vera Rubin

NVIDIA's pitch is not a sidecar FPGA bolted to a GPU box—it is a co-designed heterogeneous inference engine where each processor class owns the phases it is best at.

| Workload | Hardware |
|---|---|
| Training | Vera Rubin NVL72 (Rubin GPU) |
| Prefill & attention | Vera Rubin NVL72 (Rubin GPU) |
| Long-context processing | Vera Rubin NVL72 (Rubin GPU) |
| Low-latency FFN / MoE decode | Groq 3 LPX (LPU) |
| Premium real-time token generation | Groq 3 LPX (LPU) |

The glue layer is NVIDIA Dynamo, software that classifies requests in real time, routes prefill and attention work to Rubin GPUs, and steers latency-sensitive decode to LPUs—what NVIDIA brands the AFD (Attention–FFN disaggregation) loop.
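The routing split described above can be sketched in a few lines. This is a toy illustration, not Dynamo's API: the `Phase`, `Pool`, `Request`, and `route` names are hypothetical, and sending decode that is not latency-sensitive to GPUs is an assumption, since the article only says that latency-sensitive decode is steered to LPUs.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    TRAINING = "training"
    PREFILL = "prefill"
    LONG_CONTEXT = "long_context"
    DECODE = "decode"


class Pool(Enum):
    RUBIN_GPU = "Vera Rubin NVL72 (Rubin GPU)"
    GROQ_LPU = "Groq 3 LPX (LPU)"


@dataclass(frozen=True)
class Request:
    phase: Phase
    latency_sensitive: bool = False  # hypothetical flag, e.g. derived from an SLO tier


def route(req: Request) -> Pool:
    """Pick a hardware pool for one unit of work (illustrative policy only)."""
    # Latency-sensitive decode goes to the LPU pool, per the workload table.
    if req.phase is Phase.DECODE and req.latency_sensitive:
        return Pool.GROQ_LPU
    # Everything else, including batch decode (an assumption), stays on GPUs.
    return Pool.RUBIN_GPU


for r in (Request(Phase.PREFILL), Request(Phase.DECODE, latency_sensitive=True)):
    print(r.phase.value, "->", route(r).value)
```

A real scheduler would also weigh queue depth, KV-cache placement, and the cost of moving activations between pools; the point here is only that the decision keys on request phase and latency class.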

At GTC 2026, NVIDIA leadership emphasized that integrating LPU/LPX with Rubin for decode optimization is a primary focus going to market—not a side experiment. In parallel, the earlier Rubin CPX concept—a cost-optimized monolithic GPU variant sketched for inference—has been set aside in favor of this LPX-centric path.

The Vision: Speed-of-Thought Computing

The product narrative extends beyond faster chat UIs. LPX is framed for agentic AI: systems that plan, call tools, and iterate continuously, where human-perceived pauses break trust.

When generation reaches roughly 1,000 tokens per second per user, the interaction model shifts from turn-taking to something closer to a live thinking partner. That in turn makes multi-agent orchestration more practical: spawning sub-agents, cross-checking reasoning, and converging in milliseconds rather than seconds.
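To make the 1,000 tokens-per-second figure concrete, the sketch below converts a generation rate into wall-clock time for a sequential agent loop. The 50 tokens-per-second baseline, the step count, and the tokens per step are hypothetical values chosen for illustration, and the model ignores network, tool-call, and prefill time.

```python
def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to emit `tokens` at a steady decode rate."""
    return tokens / tokens_per_second


def agent_loop_seconds(steps: int, tokens_per_step: int, tokens_per_second: float) -> float:
    """Total decode time for `steps` sequential agent turns."""
    return steps * generation_seconds(tokens_per_step, tokens_per_second)


FAST_TPS = 1000.0   # per-user rate cited in the article
SLOW_TPS = 50.0     # hypothetical baseline for comparison

STEPS = 10            # hypothetical: plan, call tools, revise, ...
TOKENS_PER_STEP = 200  # hypothetical output length per turn

per_token_ms = 1000.0 / FAST_TPS
fast_total = agent_loop_seconds(STEPS, TOKENS_PER_STEP, FAST_TPS)
slow_total = agent_loop_seconds(STEPS, TOKENS_PER_STEP, SLOW_TPS)

print(f"Per-token latency at {FAST_TPS:.0f} tok/s: {per_token_ms:.1f} ms")
print(f"{STEPS}-step loop at {FAST_TPS:.0f} tok/s: {fast_total:.1f} s")
print(f"{STEPS}-step loop at {SLOW_TPS:.0f} tok/s: {slow_total:.1f} s")
```

Because agent turns are sequential, decode time multiplies with the number of steps, which is why per-user token rate matters more for agentic workloads than for a single chat reply.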

Huang also sketched a portfolio view in which low-latency, premium-priced token generation could represent on the order of one quarter of AI cluster compute—a high-margin slice NVIDIA intends to capture with LPX-class silicon.

Why This Was Existentially Necessary for NVIDIA

Treating the Groq transaction as a vanity purchase misreads the market. NVIDIA's deepest moat was built on training: years of datacenter spend on massive parallel jobs. Meanwhile, the industry has moved into an inference era in which latency, cost per token, and serving economics dominate the P&L.

| | Training | Inference |
|---|---|---|
| Priority | Raw throughput | Low latency |
| Workload shape | Parallel batches | Sequential, real-time decode |
| Memory pattern | HBM bandwidth | On-chip SRAM |
| Typical fit | GPU (NVIDIA) | LPU / specialized accelerators |

An independent Groq scaling enterprise inference would have chipped away at a fast-growing segment without ever needing to win a head-to-head CUDA fight. Buying Groq both removes that vector and adds what NVIDIA now presents as the strongest dedicated decode fabric in its portfolio—merged with Vera Rubin as a single reference architecture story.

The Bottom Line

Groq 3 LPX is best understood as the hardware expression of a strategic pivot: even the default platform for AI training recognized a gap in the inference story large enough to justify a headline-grabbing deal.

For enterprises and builders, the practical implication is that one-size-fits-all GPU inference is giving way to heterogeneous systems—specialized silicon for specialized phases, orchestrated by software that understands request types and SLOs. The competitive question is less who ships the largest single chip and more who ships the most coherent full stack. With Vera Rubin plus Groq LPX on the same roadmap, NVIDIA is asking the market to grade it on that wider scorecard.
